Best Deep Learning Software Shortlist

Deep learning software applies artificial intelligence to intricate business problems. With high-performance computing frameworks and good tutorials, even a startup can build convolutional neural networks (CNNs) for image recognition and recurrent neural networks (RNNs) for sequential data such as text or time series.

Many of these platforms are modular, so you can combine independent components for data mining and predictive analytics. Some offer no-code features, and some process big data through engines such as Apache Spark, which helps keep CPU usage efficient. Whether you work with node-based workflows or in languages like JavaScript, these tools make regression and other predictive modeling more approachable.

Why Trust Our Software Reviews

We’ve been testing and reviewing software since 2023. As tech leaders ourselves, we know how critical and difficult it is to make the right decision when selecting software.

We invest in deep research to help our audience make better software purchasing decisions. We've tested more than 2,000 tools for different tech use cases and written over 1,000 comprehensive software reviews. Learn how we stay transparent, and read about our software review methodology.

Best Deep Learning Software Summary

This comparison chart summarizes pricing details for my top deep learning software selections to help you find the best one for your budget and business needs.

| # | Tool | Best For | Trial Info | Price |
|---|---|---|---|---|
| 1 | Comet | Best for experiment-driven machine learning development | Not available | From $39/user/month (billed annually) |
| 2 | Labellerr | Best for automated data labeling and annotation in AI | Not available | From $49/user/month |
| 3 | cnvrg.io | Best for managing, automating, and accelerating ML workflows | 14-day free trial | Pricing upon request |
| 4 | NVIDIA GPU Cloud (NGC) | Best for leveraging powerful GPU-accelerated AI and deep learning tools | Not available | From $0.09/GPU/hour (billed monthly) |
| 5 | Wolfram Mathematica | Best for symbolic and numerical computation in deep learning | Not available | From $25/user/month (billed annually) |
| 6 | Keras | Best for quick prototyping and production of neural networks | Not available | Pricing upon request |
| 7 | Appen | Best for accessing large-scale, diverse human-annotated datasets | Not available | Customized price upon request |
| 8 | Torch | Best for advanced algorithm development with extensive libraries | Free demo available | Pricing upon request |
| 9 | Amplifire | Best for improving learning outcomes with AI-driven adaptive learning | Not available | Pricing upon request |
| 10 | Prime AI | Best for easy integration of machine learning into business operations | Not available | From $59/user/month |


Best Deep Learning Software Reviews

Below are my summaries of the deep learning software that made my shortlist. Each review covers what sets the tool apart, its tradeoffs, pros and cons, and ideal use cases to help you find the best fit.

Comet

Best for experiment-driven machine learning development

Comet is on my list because it is purpose-built for experiment-driven workflows. It fits teams that are scaling up deep learning experiments and need to track, compare, and visualize results in detail.

What I really appreciate is how it manages experiment metadata, model versions, and code changes all in one place. It’s especially useful when you need to reproduce results across large, fast-moving projects.

Comet’s Best For

- Data science teams managing high-volume experiment tracking

- Organizations focused on reproducible, experiment-driven ML workflows

Comet’s Not Great For

- Teams wanting built-in model deployment and serving features

- Small projects that don’t require rigorous experiment management

What sets Comet apart

Comet is built for experiment-heavy workflows, where keeping careful records matters just as much as building the model. Unlike MLflow, which pairs experiment tracking with model packaging, a model registry, and deployment tooling, Comet expects you to spend most of your time designing, tracking, and comparing experiments. This feels natural when you're reviewing dozens or hundreds of runs and version control is part of daily work.

This matches well with research-driven teams or anyone with large experimentation pipelines.
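To make the record-keeping idea concrete, here is a minimal, stdlib-only sketch of what an experiment tracker stores for each run: hyperparameters, final metrics, and a hash of the code that produced them, so runs can be compared and reproduced later. This is not Comet's API; the `Run` class and `best_run` helper are hypothetical names, and the accuracy values are made up for illustration.

```python
import hashlib
import json
from dataclasses import dataclass, field


@dataclass
class Run:
    """One experiment run: what was tried, what it scored, and which code produced it."""
    name: str
    params: dict
    metrics: dict = field(default_factory=dict)
    code_hash: str = ""

    def snapshot_code(self, source: str) -> None:
        # Hash the training code so a result can be traced to the exact version that produced it.
        self.code_hash = hashlib.sha256(source.encode("utf-8")).hexdigest()[:12]

    def to_json(self) -> str:
        # Sorted keys make identical runs serialize to identical records.
        return json.dumps(
            {"name": self.name, "params": self.params,
             "metrics": self.metrics, "code_hash": self.code_hash},
            sort_keys=True,
        )


def best_run(runs, metric, higher_is_better=True):
    """Return the run with the best value of `metric`, skipping runs that never logged it."""
    scored = [r for r in runs if metric in r.metrics]
    return (max if higher_is_better else min)(scored, key=lambda r: r.metrics[metric])


train_code = "def train(lr, batch_size): ..."
runs = []
for lr, acc in [(0.1, 0.81), (0.01, 0.88), (0.001, 0.85)]:  # made-up results
    run = Run(name=f"lr={lr}", params={"lr": lr, "batch_size": 32})
    run.snapshot_code(train_code)
    run.metrics["val_accuracy"] = acc  # in practice this comes from an actual training run
    runs.append(run)

print(best_run(runs, "val_accuracy").name)  # lr=0.01
```

A dedicated platform adds the parts this sketch leaves out, such as storage, dashboards, and team sharing, but the core record is the same: parameters, metrics, and a code fingerprint per run.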

Tradeoffs with Comet

By putting experiment tracking first, Comet leaves model deployment and serving out of scope. You need a separate platform if you want integrated model operations.

Pros and Cons

Pros:

- Broad compatibility with popular libraries

- Effective performance visualizations

- Robust experiment management

Cons:

- Limited offline capabilities

- UI can have a steep learning curve

- Pricing may be steep for small teams

Labellerr

Best for automated data labeling and annotation in AI

Labellerr stands out for me because it brings automation to the toughest part of deep learning projects: turning raw data into high-quality labels. I've seen teams working on computer vision or NLP move quickly from manual effort to AI-assisted annotation using its active learning and quality workflow features. What I like most is how Labellerr handles large volumes of unstructured data, with audit trails and customizable review cycles that keep datasets production-ready.

Labellerr’s Best For

- AI and data science teams handling large unstructured datasets

- Projects needing automated image, video, or text annotation

Labellerr’s Not Great For

- Simple annotation tasks where automation isn’t needed

- Teams needing deep integration with legacy MLOps platforms

What sets Labellerr apart

Labellerr is built around automation and quality control for labeling unstructured data. Unlike manual annotation systems or simpler tools like CVAT, Labellerr assumes you want to automate the bulk of data labeling while still injecting quality checks and team review where it counts. In practice, I see teams using it when large datasets or frequent model updates make manual processes impractical.
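Labellerr's internal workflow isn't documented here, but the general pattern of automated labeling with a human check can be sketched in a few lines. The following is a hypothetical illustration, not Labellerr's API: model pre-labels at or above a confidence threshold (0.9 is an arbitrary assumption) are accepted automatically, and the rest go to a human review queue ordered least-confident first, a simple form of uncertainty sampling.

```python
from dataclasses import dataclass


@dataclass
class PreLabel:
    item_id: str
    label: str
    confidence: float  # model's probability for its predicted label, in [0, 1]


def route(prelabels, accept_threshold=0.9):
    """Split model pre-labels into auto-accepted ones and a human review queue.

    The review queue is sorted least-confident first, so annotators see the
    items the model is least sure about before the rest.
    """
    accepted = [p for p in prelabels if p.confidence >= accept_threshold]
    review = sorted(
        (p for p in prelabels if p.confidence < accept_threshold),
        key=lambda p: p.confidence,
    )
    return accepted, review


batch = [
    PreLabel("img_001", "cat", 0.97),
    PreLabel("img_002", "dog", 0.55),
    PreLabel("img_003", "cat", 0.91),
    PreLabel("img_004", "dog", 0.72),
]
accepted, review = route(batch)
print([p.item_id for p in accepted])  # ['img_001', 'img_003']
print([p.item_id for p in review])    # ['img_002', 'img_004']
```

Raising the threshold sends more items to reviewers and improves label quality; lowering it saves annotation time at the cost of more model errors slipping through.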

Tradeoffs with Labellerr

Labellerr optimizes for high-volume, automated annotation, but this focus can mean less flexibility or support if you mainly work on smaller, one-off projects where manual control is more important.

Pros and Cons

Pros:

- Robust project management tools

- Supports various data formats

- Efficient automation of data labeling

Cons:

- Limited flexibility in certain workflows

- Could be complicated for beginners

- May not be cost-effective for smaller projects

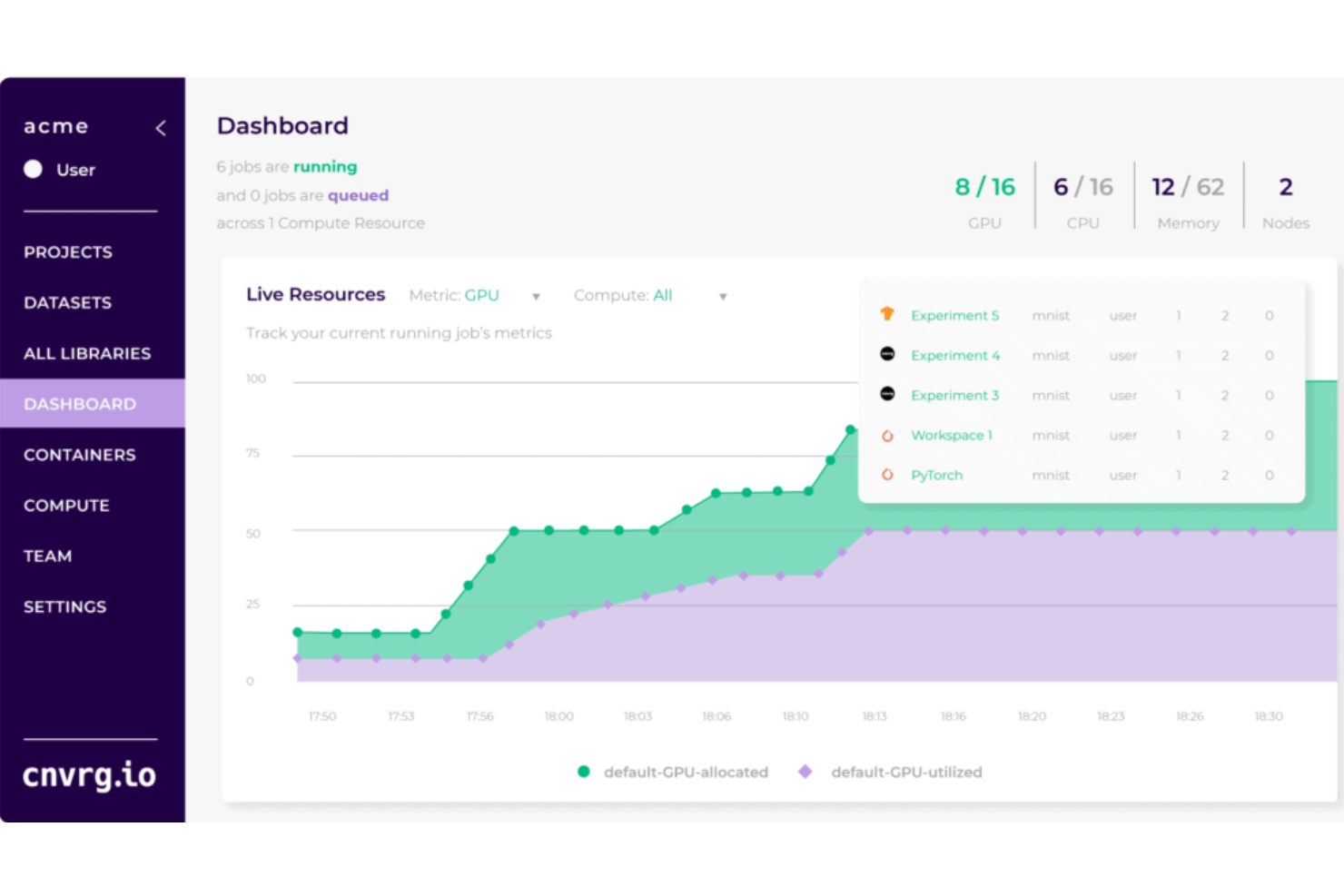

cnvrg.io

Best for managing, automating, and accelerating ML workflows

cnvrg.io makes the cut because it lets teams run, manage, and automate every part of the machine learning lifecycle from one place. I picked it for organizations ready to grow out of scattered Jupyter notebooks and unreliable pipeline scripts, especially when they need versioning and experiment tracking on collaborative projects.

What I appreciate most is how cnvrg.io supports hybrid and multi-cloud infrastructure, so training and deploying deep learning models becomes something you can scale and repeat, not just run ad hoc.

cnvrg.io’s Best For

- ML engineers managing complex, multi-stage model workflows

- Organizations deploying models across hybrid or multi-cloud environments

cnvrg.io’s Not Great For

- Small teams focused on basic, low-volume ML experiments

- Anyone needing a lightweight or code-free deep learning platform

What sets cnvrg.io apart

cnvrg.io is designed for teams that need end-to-end ML workflow management across diverse infrastructure, not just the notebook sharing or lightweight experimentation that tools like Google Colab focus on.

It expects you to structure projects around versioning, collaboration, and repeatable pipelines that can scale, rather than just running disconnected scripts. In practice, this tends to work best when you need consistency and auditability across multiple ML projects, not just building single models.
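The idea of repeatable, auditable pipelines can be illustrated without any platform at all. Below is a hypothetical, stdlib-only sketch (not cnvrg.io's API): each step's output is cached under a hash of the step name and its input, so rerunning the same pipeline on the same data reproduces the same result without recomputing it. It assumes every step input is JSON-serializable.

```python
import hashlib
import json


class Pipeline:
    """A toy pipeline whose steps are cached by a hash of (step name, input).

    Re-running with the same input reuses earlier results instead of recomputing,
    and each output can be traced back to the exact input that produced it.
    """

    def __init__(self, steps):
        self.steps = steps      # list of (name, function) pairs, run in order
        self.cache = {}         # hash key -> step output
        self.executions = 0     # how many step functions actually ran

    @staticmethod
    def _key(name, data):
        payload = json.dumps({"step": name, "input": data}, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def run(self, data):
        for name, fn in self.steps:
            key = self._key(name, data)
            if key not in self.cache:
                self.cache[key] = fn(data)  # steps must not mutate their input
                self.executions += 1
            data = self.cache[key]
        return data


pipeline = Pipeline([
    ("clean", lambda rows: [r for r in rows if r is not None]),
    ("scale", lambda rows: [r / max(rows) for r in rows]),
])
print(pipeline.run([2, None, 4, 8]))  # [0.25, 0.5, 1.0]
print(pipeline.run([2, None, 4, 8]))  # same result, served from the cache
print(pipeline.executions)            # 2
```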

Tradeoffs with cnvrg.io

By focusing on orchestrating large-scale, reliable workflows, cnvrg.io adds complexity, which means small experiments or quick prototyping can feel slower compared to more lightweight deep learning tools.

Pros and Cons

Pros:

- Emphasis on automation, freeing up data scientists for more complex tasks

- Robust integration capabilities

- Comprehensive management of ML workflows

Cons:

- Customization options could be more robust

- Might have a learning curve for new users

- Pricing transparency could be improved

NVIDIA GPU Cloud (NGC)

Best for leveraging powerful GPU-accelerated AI and deep learning tools

NVIDIA GPU Cloud (NGC) is on my shortlist because it makes large GPU-accelerated workloads practical, even for fast-moving teams. I've used NGC to deploy containers packed with optimized frameworks, pretrained models, and research code for computer vision and natural language processing.

What I appreciate is the curated, regularly updated catalog of models and containers, especially when teams need enterprise-grade resources and support for production-scale deep learning.

NGC’s Best For

- Organizations running production-scale AI and deep learning models

- Teams that need access to NVIDIA-optimized frameworks and model containers

NGC’s Not Great For

- Small teams without access to GPU infrastructure

- Projects focused on simple, non-accelerated machine learning tasks

What sets NGC apart

NGC is built for teams working with deep learning models that demand GPU-optimized resources and officially maintained containers. Unlike Google Cloud Vertex AI, which abstracts away much of the underlying infrastructure, NGC expects you to know which frameworks and hardware you need and lets you run everything with full NVIDIA tuning.

In practice, this fits where you want granular control over acceleration and model deployment, not prepackaged workflows.

Tradeoffs with NGC

NGC optimizes for high control and NVIDIA-specific acceleration, but it requires dedicated GPU access, so smaller projects and CPU-based prototypes often end up paying for unused GPU resources or extra setup.

Pros and Cons

Pros:

- Integration with major cloud providers

- Broad selection of pre-trained models

- Powerful GPU-accelerated software

Cons:

- Requires knowledge of NVIDIA’s ecosystem

- May be overkill for smaller projects or businesses

- The cost can add up quickly with heavy usage
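To see why heavy usage adds up, here is back-of-the-envelope arithmetic using the entry price of $0.09/GPU/hour from the summary table. The two usage patterns are hypothetical, and actual rates depend on the GPU type and provider, so treat this as an estimate rather than a quote.

```python
def monthly_gpu_cost(rate_per_gpu_hour, gpus, hours_per_day, days=30):
    """Estimate a month's bill under hourly, per-GPU pricing."""
    return rate_per_gpu_hour * gpus * hours_per_day * days


# $0.09/GPU/hour is the starting price; larger GPUs typically cost more per hour.
light = monthly_gpu_cost(0.09, gpus=1, hours_per_day=4)   # one GPU, a few hours a day
heavy = monthly_gpu_cost(0.09, gpus=8, hours_per_day=24)  # eight GPUs, around the clock
print(f"${light:.2f}")  # $10.80
print(f"${heavy:.2f}")  # $518.40
```

The cost scales linearly with GPU count and hours, so an eight-GPU job running continuously costs roughly 48 times as much as a single GPU used four hours a day, even at the same hourly rate.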

Wolfram Mathematica

Best for symbolic and numerical computation in deep learning

Wolfram Mathematica earns a spot here because it handles both symbolic and numerical computation for deep learning work in one environment. You can manipulate mathematical expressions symbolically and run numeric calculations side by side, which I find critical for teams researching custom neural architectures or algorithms.

What I appreciate most is how easily you can move between analytic exploration and real-world data modeling, especially for experimental or hybrid AI workflows.

Wolfram Mathematica’s Best For

- Researchers building custom neural network models and experiments

- Deep learning workflows needing hybrid symbolic-numerical capability

Wolfram Mathematica’s Not Great For

- Teams wanting out-of-the-box deep learning pipelines

- Large-scale production deployment or distributed model serving

What sets Wolfram Mathematica apart

Mathematica stands out because it brings symbolic computation and deep learning together in a single workspace. Unlike TensorFlow or PyTorch, which expect you to build everything numerically, Mathematica lets you manipulate formulas and run experiments side by side. I see this work best when you need rapid prototyping of new math-driven models or want to interpret results symbolically.

This approach tends to pay off more in research and custom AI algorithm development than in production.
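As a small illustration of the symbolic-plus-numeric workflow, take the sigmoid activation σ(x) = 1 / (1 + e^(-x)). Differentiating it symbolically gives the closed form σ'(x) = σ(x)(1 - σ(x)), which is what backpropagation uses. The sketch below is plain Python, not Wolfram Language; it checks that identity numerically against a central finite difference, the kind of side-by-side check Mathematica lets you do in a single notebook.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def sigmoid_grad_closed_form(x):
    # Symbolic result: d/dx sigmoid(x) = sigmoid(x) * (1 - sigmoid(x))
    s = sigmoid(x)
    return s * (1.0 - s)


def central_difference(f, x, h=1e-5):
    # Numerical derivative: (f(x + h) - f(x - h)) / (2h), with error on the order of h**2
    return (f(x + h) - f(x - h)) / (2.0 * h)


for x in (-3.0, 0.0, 2.5):
    exact = sigmoid_grad_closed_form(x)
    approx = central_difference(sigmoid, x)
    print(f"x={x:+.1f}  closed form={exact:.6f}  finite difference={approx:.6f}")
```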

Tradeoffs with Wolfram Mathematica

By optimizing for flexibility and experimentation, Mathematica ends up lacking the deployment and scaling focus of dedicated deep learning frameworks. In practice, you trade production-readiness for creative freedom and advanced analysis.

Pros and Cons

Pros:

- Offers integration with other data analysis platforms

- Provides a wide array of computational tools and features

- Exceptional symbolic and numerical computation capabilities

Cons:

- Requires a steep learning curve for optimal usage

- Higher cost in comparison to some other computational tools

- The interface might be overwhelming for new users

Keras

Best for quick prototyping and production of neural networks

Keras earns its spot because it's ideal for teams who need to move quickly from idea to working neural network models. I recommend it when you're prototyping or iterating fast and want a minimal barrier between concept and execution. I've found that its high-level API, tight integration with TensorFlow, and modular design make it easy to test ideas or productionize workflows without much friction.

What stands out to me is how little overhead there is when shifting from a prototype to something you can deploy, especially in real-world deep learning projects where time matters.

Keras’s Best For

- Fast prototyping of deep learning models and workflows

- Researchers or engineers moving models quickly to production

Keras’s Not Great For

- Projects requiring highly customized, low-level neural network control

- Teams working outside mainstream Python and TensorFlow ecosystems

What sets Keras apart

Keras is designed for moving quickly from theory to runnable code, so you spend more time on your network logic and less on setup. Compared to PyTorch, which lets you dig deep into the details, Keras guides you toward higher-level workflows with clear structure. I find it matches best when you want to test and deploy models fast, without getting lost in configuration.

Tradeoffs with Keras

Keras optimizes for speed and simplicity, but you lose some control over lower-level model details, which limits custom architectures and fine-tuned debugging.

Pros and Cons

Pros:

- Comprehensive set of tools and features

- Extensible and highly modular

- User-friendly, enabling rapid prototyping

Cons:

- Requires understanding of underlying platforms for optimization and debugging

- Can be less efficient for models with multiple inputs/outputs

- For very specific tasks, lower-level APIs may offer more control

Appen

Best for accessing large-scale, diverse human-annotated datasets

Appen makes my list because it gives you access to one of the world's largest libraries of human-annotated data, which is hard to replicate when you're scaling AI or deep learning projects. I've seen teams struggle to build diverse training datasets, and this is where Appen's curated sets (spanning languages, demographics, and subject matter) make a difference.

What I like is being able to tap into domain-specific text, image, audio, and video datasets that other platforms don’t offer at this scale. I’d recommend Appen when building or testing deep learning models that demand rich, representative inputs, especially for multilingual or specialized applications.

Appen’s Best For

- AI and data science teams needing diverse, labeled training data

- Deep learning projects requiring large-scale, multilingual datasets

Appen’s Not Great For

- Teams that need custom model building tools

- Small projects with limited data requirements

What sets Appen apart

Appen is built for organizations that need human-annotated datasets at scale, without handling the logistics of data collection and labeling in-house. It expects you to design projects around real-world data, incorporating input from various demographics and languages.

Unlike platforms like Hugging Face, which provide pre-trained models and hosting, Appen focuses entirely on delivering customized, representative data for training and testing deep learning models.

Tradeoffs with Appen

Appen optimizes for depth and variety in labeled datasets, but you give up direct control over how those datasets are produced or iterated. This can slow experimentation if you need instant adjustments or niche data types.

Pros and Cons

Pros:

- Integrations with numerous machine learning platforms

- High standards for data quality and security

- Provides large, diverse, human-annotated datasets

Cons:

- The complexity of projects might affect delivery time

- Might be expensive for smaller projects or businesses

- Pricing is not transparent

Torch

Best for advanced algorithm development with extensive libraries

Torch is on my shortlist because of the depth and flexibility it offers for custom algorithm development. When I've needed to implement complex neural architectures or test bleeding-edge deep learning techniques, Torch's extensive library support has let me do it without hacking things together.

I like how you can tap into both lower-level operations and ready-to-use modules, so teams with advanced needs aren’t boxed in by framework limitations. This feels right for research, academics, or any team pushing into cutting-edge AI.

Torch’s Best For

- Research teams building novel neural network architectures

- Projects needing extensive customization with deep learning libraries

Torch’s Not Great For

- Beginners looking for a simple deep learning toolkit

- Teams that want out-of-the-box AI model templates

What sets Torch apart

Torch takes a very open-ended approach, giving you direct access to model building blocks and expecting you to shape the architecture yourself. This is closer to how tools like TensorFlow operate, but Torch feels less prescriptive in how you define networks or tweak algorithms. I see researchers and engineers picking Torch when they need control at a granular level, rather than relying on the conventions of a framework like Keras.

Tradeoffs with Torch

Torch optimizes for customization and flexibility, which makes setup and experimentation slower for anyone needing simple out-of-the-box models. If you prefer starting with ready-made templates, you will probably get bogged down.
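Torch itself is scripted in Lua, so rather than guess at its API, here is a plain-Python sketch of the kind of low-level control described above: a single logistic neuron trained with a hand-written forward pass, gradient, and update step. A high-level API such as Keras would hide all of this behind a single `fit()` call. The toy data and learning rate are illustrative assumptions.

```python
import math


def train_logistic_neuron(data, lr=0.5, epochs=200):
    """Train y ≈ sigmoid(w*x + b) with full-batch gradient descent on binary cross-entropy."""
    w, b = 0.0, 0.0
    n = len(data)
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for x, y in data:
            p = 1.0 / (1.0 + math.exp(-(w * x + b)))  # forward pass
            # For sigmoid + binary cross-entropy, dLoss/dz simplifies to (p - y)
            grad_w += (p - y) * x
            grad_b += (p - y)
        w -= lr * grad_w / n  # update step, averaged over the batch
        b -= lr * grad_b / n
    return w, b


# Toy data: the label is 1 when x is positive
data = [(-2.0, 0), (-1.0, 0), (-0.5, 0), (0.5, 1), (1.0, 1), (2.0, 1)]
w, b = train_logistic_neuron(data)


def predict(x):
    return 1 if 1.0 / (1.0 + math.exp(-(w * x + b))) >= 0.5 else 0


print([predict(x) for x, _ in data])  # [0, 0, 0, 1, 1, 1]
```

Writing the gradient by hand is exactly the flexibility (and the extra work) the tradeoff above describes: you can change any line of the loss or update rule, but nothing is done for you.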

Pros and Cons

Pros:

- Strong community support

- High computational efficiency

- Extensive machine-learning libraries

Cons:

- Lack of enterprise-level support

- Lua-based, and Lua is far less popular than Python in the data science community; active development has largely moved to its Python successor, PyTorch

- Might have a steep learning curve for beginners

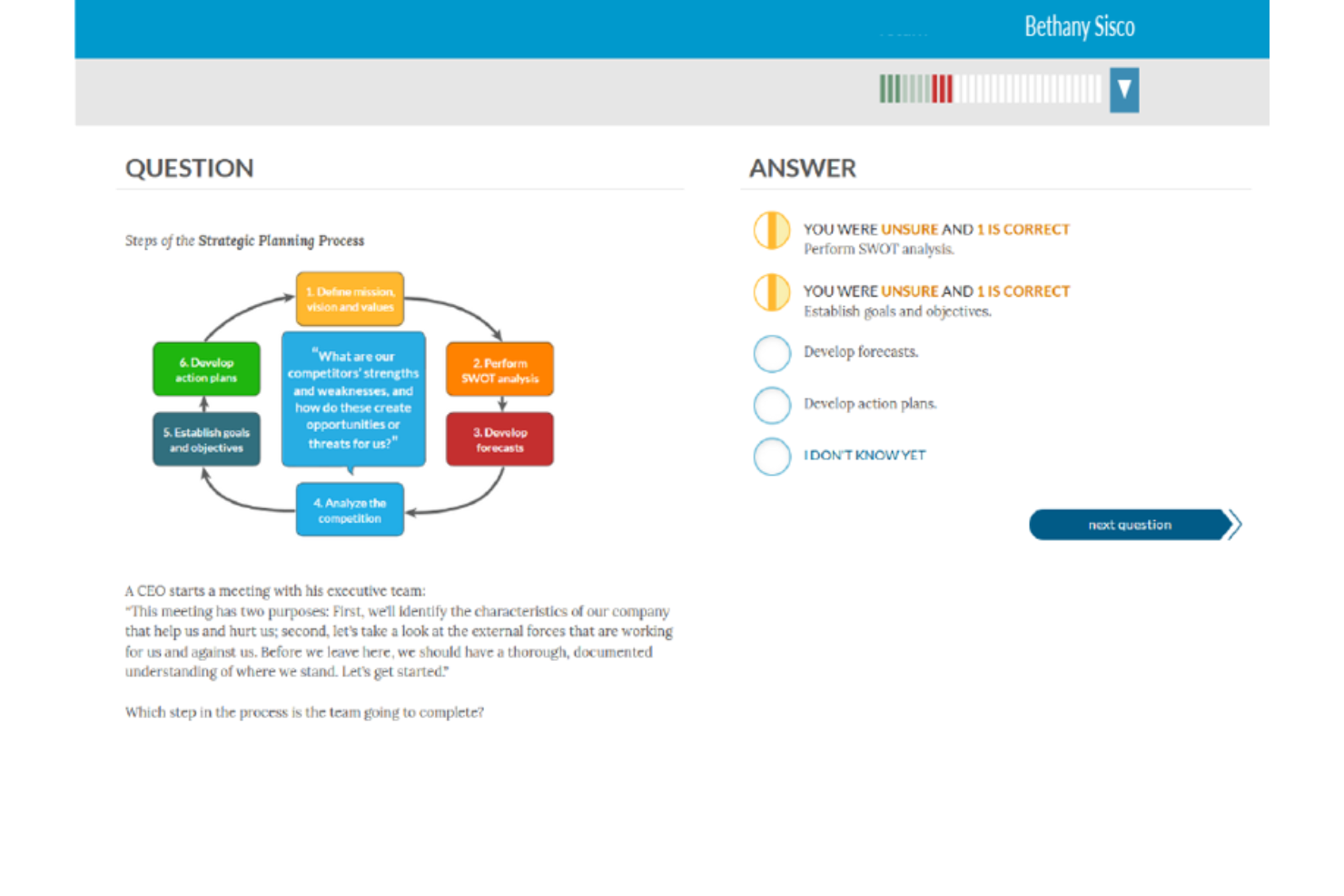

Amplifire

Best for improving learning outcomes with AI-driven adaptive learning

Amplifire stands out to me because it blends AI-driven adaptive learning with deep content analytics to pinpoint knowledge gaps for each learner. I recommend it when organizations want to boost learning outcomes at a system-wide scale, especially in compliance-heavy sectors like healthcare or finance.

What sets Amplifire apart is its diagnostic assessments and real-time feedback, which actually tailor the learning path based on demonstrated strengths and weaknesses. I appreciate that teams can quickly identify risk areas and track demonstrated knowledge over time, instead of just completion rates.

Amplifire’s Best For

- Large organizations needing adaptive training at scale

- Healthcare and compliance-driven sectors tracking knowledge risk

Amplifire’s Not Great For

- Research teams needing custom deep learning model building

- Small teams seeking open-source or highly customizable AI tools

What sets Amplifire apart

Amplifire approaches training as a data-driven process, adapting content automatically based on what each learner shows they know or don’t know. Unlike platforms like Coursera, which rely on broad, linear learning paths, Amplifire uses diagnostic tools up front and adapts material in real time as people work through it.

This works well for regulated industries that need proof of knowledge retention instead of just tracking completions.
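Amplifire's own algorithm isn't described here, so the following is only a generic, hypothetical sketch of adaptive sequencing: keep a running mastery estimate per topic, nudge it after each answer, and serve the weakest topic next. The topic names, the 0.3 update rate, and the 0.5 starting estimate are arbitrary assumptions.

```python
def update_mastery(mastery, topic, correct, rate=0.3):
    """Move the topic's mastery estimate toward 1 on a correct answer, toward 0 on a miss."""
    target = 1.0 if correct else 0.0
    mastery[topic] += rate * (target - mastery[topic])


def next_topic(mastery):
    """Adaptive step: serve the topic the learner currently knows least well."""
    return min(mastery, key=mastery.get)


mastery = {"hand_hygiene": 0.5, "patient_privacy": 0.5, "sharps_disposal": 0.5}
update_mastery(mastery, "hand_hygiene", correct=True)      # 0.5 -> 0.65
update_mastery(mastery, "patient_privacy", correct=False)  # 0.5 -> 0.35
update_mastery(mastery, "sharps_disposal", correct=True)   # 0.5 -> 0.65
print(next_topic(mastery))  # patient_privacy
```

Unlike a fixed linear course, the next item depends on what the learner has demonstrated, which is also what makes per-topic knowledge risk reportable.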

Tradeoffs with Amplifire

Amplifire optimizes for adaptive content delivery and assessment, but you give up flexibility to create or tune underlying deep learning models. If you need a platform for custom AI research or development, this won’t fit that workflow.

Pros and Cons

Pros:

- Integrates with various learning management systems

- Identifies and addresses knowledge gaps

- Uses AI-driven methods to enhance learning outcomes

Cons:

- Could offer more customization options for unique learning environments

- May require training to maximize its features

- Pricing details are not transparent

Prime AI

Best for easy integration of machine learning into business operations

Prime AI is on my list for how fast teams can plug deep learning into workflows that aren't already built for AI. What catches my attention is its focus on ready-to-use machine learning modules and APIs that help you inject predictive models, recommenders, or customer behavior analysis into existing business processes.

I like how it fits when you want deep learning without building custom platforms from scratch. This works best for teams ready to test ML use cases and iterate quickly, especially when technical resources are limited.

Prime AI’s Best For

- Businesses wanting fast ML model deployment without deep expertise

- Teams adding AI to operations through APIs and modules

Prime AI’s Not Great For

- Research teams building custom deep learning architectures

- Organizations demanding full control over model training and tuning

What sets Prime AI apart

What I notice with Prime AI is how it’s built for businesses that want to put machine learning to work without a big research push or custom pipeline. It expects you to have an existing process, then drop in prebuilt models or predictive tools instead of building from scratch like you would with TensorFlow or PyTorch. In practice, this is good when you want a shortcut from data to deployed ML, without the overhead of a full platform.

Tradeoffs with Prime AI

Prime AI optimizes for rapid implementation and packaged models, but you lose fine-grained control over model tuning and architecture, which limits advanced experimentation.

Pros and Cons

Pros:

- Wide range of integrations

- Comprehensive support for custom model training

- Pre-trained models for quick integration

Cons:

- Steep learning curve for non-technical users

- Limited options for very specialized tasks

- Pricing could be high for small businesses

Other Deep Learning Software

Here are some additional deep learning software options that didn’t make it onto my shortlist, but are still worth checking out:

- MIPAR: Good for image analysis with deep learning algorithms

- Cauliflower: Good for intuitive AI model creation with a visual interface

- DataRobot: Good for automating machine learning model building and deployment

- Valohai: Good for managing end-to-end machine learning pipelines

- Aporia: Good for monitoring and explaining AI models in production

- Caffe: Good for fast prototyping of deep learning models

- Lityx: Good for advanced analytics and marketing automation

- Neural Designer: Good for simplifying complex data analytics with neural networks

- Fixzy Assist: Good for improving maintenance processes with predictive AI

- Môveo AI: Good for optimizing logistics and supply chain operations

- PaperEntry: Good for automating data entry and digitizing paperwork

- Industrytics: Good for enabling predictive maintenance in industrial environments

- Intel Deep Learning Training Tool: Good for accelerating deep learning model training on Intel hardware

- Cognex ViDi Suite: Good for quality inspection with deep learning-based machine vision

- Diffgram: Good for improving data labeling and annotation in machine learning projects

- Lt for labs: Good for improving lab efficiency with machine learning

- Machine Learning on AWS: Good for deploying scalable machine learning solutions in the cloud

Deep Learning Software Selection Criteria

When selecting the best deep learning software to include in this list, I considered common buyer needs and pain points like managing large datasets and ensuring model accuracy. I also used the following framework to keep my evaluation structured and fair:

Core Functionality (25% of total score)

To be considered for inclusion in this list, each solution had to fulfill these common use cases:

- Data preprocessing

- Model training

- Model evaluation

- Deployment of models

- Integration with data sources
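Of these, data preprocessing is the step most easily gotten subtly wrong. A common rule, sketched below in plain Python, is to compute scaling statistics on the training split only and reuse them on evaluation data, so no information from the test set leaks into training. The function names and numbers are illustrative, not any tool's API.

```python
import statistics


def fit_standardizer(values):
    """Learn the mean and (population) standard deviation from training data only."""
    return statistics.fmean(values), statistics.pstdev(values)


def standardize(values, mean, std):
    """Apply (x - mean) / std using statistics learned elsewhere."""
    return [(v - mean) / std for v in values]


train = [10.0, 12.0, 14.0, 16.0, 18.0]
test = [11.0, 20.0]

mean, std = fit_standardizer(train)          # statistics come from the training split
train_scaled = standardize(train, mean, std)
test_scaled = standardize(test, mean, std)   # reuse them; never refit on test data
print(mean, round(std, 4))                   # 14.0 2.8284
print([round(v, 4) for v in test_scaled])    # [-1.0607, 2.1213]
```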

Additional Standout Features (25% of total score)

To help further narrow down the competition, I also looked for unique features, such as:

- Automated hyperparameter tuning

- Real-time analytics

- Customizable dashboards

- Multi-language support

- Edge computing capabilities
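Automated hyperparameter tuning boils down to a loop: propose a configuration, train and score it, keep the best. The simplest version is an exhaustive grid search, sketched below with the standard library. The objective here is a made-up stand-in that peaks at a learning rate of 0.01 and a batch size of 64; in practice it would train a model and return its validation score. Many tools use random search or Bayesian optimization instead, which scale better than a full grid.

```python
import itertools


def grid_search(objective, space):
    """Try every combination in `space` and return (best_params, best_score).

    `space` maps each hyperparameter name to a list of candidate values.
    `objective` takes a dict of hyperparameters and returns a score to maximize.
    """
    names = list(space)
    best_params, best_score = None, float("-inf")
    for combo in itertools.product(*(space[n] for n in names)):
        params = dict(zip(names, combo))
        score = objective(params)
        if score > best_score:
            best_params, best_score = params, score
    return best_params, best_score


def toy_validation_score(p):
    # Hypothetical stand-in for "train, then measure validation performance"
    return -abs(p["learning_rate"] - 0.01) * 100 - abs(p["batch_size"] - 64) / 64


space = {"learning_rate": [0.1, 0.03, 0.01, 0.003, 0.001], "batch_size": [16, 32, 64, 128]}
best, score = grid_search(toy_validation_score, space)
print(best)  # {'learning_rate': 0.01, 'batch_size': 64}
```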

Usability (10% of total score)

To get a sense of the usability of each system, I considered the following:

- Intuitive interface design

- Clear navigation paths

- Customizable user settings

- Accessibility options

- Mobile-friendly access

Onboarding (10% of total score)

To evaluate the onboarding experience for each platform, I considered the following:

- Availability of training videos

- Interactive product tours

- Comprehensive templates

- Access to webinars

- Responsive chatbots

Customer Support (10% of total score)

To assess each software provider’s customer support services, I considered the following:

- 24/7 support availability

- Multi-channel support options

- Dedicated account managers

- Comprehensive knowledge base

- Quick response times

Value For Money (10% of total score)

To evaluate the value for money of each platform, I considered the following:

- Competitive pricing

- Flexible subscription plans

- Free trial availability

- Transparent pricing structure

- Discounts for long-term commitments

Customer Reviews (10% of total score)

To get a sense of overall customer satisfaction, I considered the following when reading customer reviews:

- Ease of use feedback

- Performance reliability

- Customer service quality

- Feature satisfaction

- Overall value perception
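The percentages above add up to 100%, so a tool's overall score is a weighted average of its per-criterion ratings. Below is a minimal sketch of that arithmetic, assuming each criterion is rated on a 0-5 scale (the scale is an assumption, not stated above) and using made-up ratings for a hypothetical tool.

```python
WEIGHTS = {
    "core_functionality": 0.25,
    "standout_features": 0.25,
    "usability": 0.10,
    "onboarding": 0.10,
    "customer_support": 0.10,
    "value_for_money": 0.10,
    "customer_reviews": 0.10,
}


def weighted_score(ratings, weights=WEIGHTS):
    """Combine per-criterion ratings (each 0-5) into one weighted 0-5 score."""
    missing = weights.keys() - ratings.keys()
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    return sum(weights[c] * ratings[c] for c in weights)


example = {
    "core_functionality": 5, "standout_features": 4, "usability": 4,
    "onboarding": 3, "customer_support": 4, "value_for_money": 3, "customer_reviews": 5,
}
print(round(weighted_score(example), 2))  # 4.15
```

Because core functionality and standout features each carry 25%, a one-point change in either moves the total 2.5 times as much as the same change in any of the 10% criteria.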

How to Choose Deep Learning Software

It’s easy to get bogged down in long feature lists and complex pricing structures. To help you stay focused as you work through your unique software selection process, here’s a checklist of factors to keep in mind:

| Factor | What to Consider |
|---|---|
| Scalability | Can the software grow with your needs? Check if it supports increasing data volumes and users without losing performance. |
| Integrations | Does it connect with your existing AI software? Look for compatibility with your data sources and business applications to avoid siloed systems. |
| Customizability | Can you tailor the software to fit your workflows? Evaluate if it offers customization options for dashboards, reports, and processes. |
| Ease of use | Is it user-friendly for your team? Consider the learning curve and whether it requires extensive training to get started. |
| Implementation and onboarding | How long will it take to get up and running? Implementing within specialized environments, such as virtual reality software, may take longer than a standard rollout. Assess the setup process, availability of onboarding resources, and support during the initial phase. |
| Cost | Does it fit your budget? Compare pricing plans, hidden fees, and long-term costs. Check for trials to evaluate value before committing. |
| Security safeguards | How does it protect your data? Ensure it complies with industry standards and offers features like encryption and access controls. |
| Support availability | Will you have help when needed? Look for 24/7 support options and the quality of resources like documentation and community forums. |

What Is Deep Learning Software?

Deep learning software is a set of tools designed to create, train, and deploy artificial neural networks for solutions like image recognition and conversational intelligence software. Data scientists, machine learning engineers, and researchers generally use these tools to enhance predictive modeling and automate complex data analysis. Data preprocessing, model training, and integration capabilities help with managing datasets and improving model accuracy. Overall, these tools offer significant value by simplifying complex data-driven tasks and improving decision-making processes.

Features

When selecting deep learning software, keep an eye out for the following key features:

- Model training: Facilitates the development of neural networks by providing tools for configuring hyperparameters and optimizing model weights.

- Integration capabilities: Allows seamless connection with existing data sources and business applications for efficient data flow. This can be even more crucial for companies using image recognition software that must deliver accurate results in real time.

- Customization options: Offers flexibility to tailor dashboards, reports, and processes to fit specific workflows and needs.

- User-friendly interface: Ensures ease of use, reducing the learning curve and making the software accessible to various team members.

- Security safeguards: Provides data protection through encryption and access controls, ensuring compliance with industry standards.

- Automated hyperparameter tuning: Improves model performance by automatically searching for good hyperparameter values, such as learning rate or batch size.

- Data preprocessing: Simplifies cleaning and organizing data, ensuring quality inputs for training models, including those behind tools such as NLP software.

- Real-time analytics: Delivers instant insights from data, supporting quick decision-making and responsiveness.

- Mobile access: Enables users to interact with the software on-the-go, increasing flexibility and accessibility.

- Training resources: Includes tutorials, webinars, and documentation to support users in learning and utilizing the software effectively.

Benefits

Implementing deep learning software provides several benefits for your team and your business. Here are a few you can look forward to:

- Improved accuracy: Enhances predictive modeling with precise data analysis and model training capabilities.

- Efficiency in data handling: Automates data preprocessing tasks, saving time and reducing manual errors.

- Scalable solutions: Supports growth with features that accommodate increasing data volumes and users.

- Informed decision-making: Offers real-time analytics for quick insights and responsive business strategies.

- Customization for specific needs: Adapts to your workflows with customizable dashboards and processes.

- Enhanced data security: Protects sensitive information with security safeguards like encryption and access controls.

- Accessibility and flexibility: Provides mobile access, allowing users to work from anywhere and stay connected.

Costs and Pricing

Selecting deep learning software requires an understanding of the various pricing models and plans available. Costs vary based on features, team size, add-ons, and more. The table below summarizes common plans, their average prices, and typical features included in deep learning software solutions:

Plan Comparison Table for Deep Learning Software

| Plan Type | Average Price | Common Features |
|---|---|---|
| Free Plan | $0 | Basic model training, limited data storage, and community support. |
| Personal Plan | $10-$30/user/month | Data preprocessing, standard analytics, and email support. |
| Business Plan | $50-$100/user/month | Advanced analytics, integration capabilities, and priority support. |
| Enterprise Plan | $150-$300/user/month | Customizable solutions, dedicated account management, and enhanced security. |
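Because most of these plans are priced per user per month, team size and billing period drive the total. Here is a quick sketch using the average ranges from the plan comparison table for a hypothetical 10-person team billed for 12 months. It ignores add-ons, usage-based charges, and annual-billing discounts.

```python
# Average monthly price ranges per user, from the plan comparison table.
PLAN_PRICE_RANGES = {
    "Free": (0, 0),
    "Personal": (10, 30),
    "Business": (50, 100),
    "Enterprise": (150, 300),
}


def annual_cost_range(plan, users):
    """Return the (low, high) yearly cost for `users` seats on a per-user monthly plan."""
    low, high = PLAN_PRICE_RANGES[plan]
    return low * users * 12, high * users * 12


for plan in ("Business", "Enterprise"):
    low, high = annual_cost_range(plan, users=10)
    print(f"{plan}, 10 users: ${low:,}-${high:,} per year")
# Business, 10 users: $6,000-$12,000 per year
# Enterprise, 10 users: $18,000-$36,000 per year
```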

Deep Learning Software FAQs

Here are some answers to common questions about deep learning software:

What are the hardware requirements for running deep learning software?

Deep learning software often requires powerful hardware to process large datasets efficiently. High-performance GPUs, ample RAM, and fast storage solutions are typically recommended. Check the software’s documentation for specific hardware recommendations to optimize performance and avoid bottlenecks.
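A useful first sizing step is estimating how much GPU memory a model's parameters need. The sketch below uses common rules of thumb: about 4 bytes per parameter for fp32 weights, 2 for fp16, and roughly 16 for fp32 training with the Adam optimizer, which stores gradients plus two moment estimates per weight. It ignores activations, batch size, and framework overhead, so treat the results as lower bounds; the 1-billion-parameter model is hypothetical.

```python
BYTES_PER_PARAM = {
    "inference_fp32": 4,       # weights only
    "inference_fp16": 2,       # weights only, half precision
    "training_adam_fp32": 16,  # weights + gradients + Adam's two moment estimates, all fp32
}


def model_memory_gb(num_params, mode):
    """Rough lower bound on GPU memory in GB (10**9 bytes); excludes activations and overhead."""
    return num_params * BYTES_PER_PARAM[mode] / 1e9


params = 1_000_000_000  # a hypothetical 1-billion-parameter model
for mode in BYTES_PER_PARAM:
    print(f"{mode}: ~{model_memory_gb(params, mode):.0f} GB")
# inference_fp32: ~4 GB
# inference_fp16: ~2 GB
# training_adam_fp32: ~16 GB
```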

Is it possible to customize the algorithms in deep learning software?

Many deep learning software solutions allow you to customize algorithms to fit your specific needs. Look for tools that offer flexible neural network architectures and the ability to modify parameters. This customization can enhance model accuracy and relevance to your particular use case.

Can deep learning software integrate with existing IT infrastructure?

Yes, most deep learning software solutions offer integration capabilities with existing IT infrastructure. You should verify that the tool supports APIs or has built-in connectors for your current systems, such as databases and cloud services, to ensure smooth data flow and compatibility.

What’s Next:

If you're in the process of researching deep learning software, connect with a SoftwareSelect advisor for free recommendations.

You fill out a form and have a quick chat where they get into the specifics of your needs. Then you'll get a shortlist of software to review. They'll even support you through the entire buying process, including price negotiations.