Databricks Review 2026: Pros, Cons, Features, and Pricing

Databricks Unified Data Analytics is a cloud-based DataOps tool designed to help teams manage, process, and analyze large-scale data across complex environments. For IT specialists juggling fragmented data pipelines, security requirements, and the need for automation, Databricks offers a unified workspace that brings together data engineering, analytics, and machine learning on a data lakehouse architecture.

In this review, you’ll find a detailed look at Databricks’ features, best and worst use cases, pros and cons, and pricing, so you can decide if it fits your team’s DataOps strategy.

Databricks Evaluation Summary

- Usage-based pricing

- Free trial + free demo available

Why Trust Our Software Reviews

We’ve been testing and reviewing software since 2023. As tech leaders ourselves, we know how critical and difficult it is to make the right decision when selecting software.

We invest in deep research to help our audience make better software purchasing decisions. We’ve tested more than 2,000 tools for different tech use cases and written over 1,000 comprehensive software reviews. Learn how we stay transparent and read our software review methodology.

Databricks Overview

If you’re judging Databricks as a DataOps tool, its collaborative workspace, strong Spark integration, and scalable cloud architecture set it apart for teams handling high-volume, complex data. Pricing can be steep and onboarding may challenge smaller teams, but its automation, broad language support, and deep integration options make it a top pick for enterprises prioritizing advanced analytics and machine learning. If you’re selecting a platform for cross-functional data teams or hybrid cloud environments, Databricks often outperforms on flexibility and performance, though simpler use cases may find the interface and setup more than they need.

Pros

- Scales efficiently for large, distributed data workloads.

- Supports collaborative workflows for data engineering and analytics.

- Automates cluster management and resource optimization.

Cons

- Pricing can be unpredictable for high-volume workloads.

- Requires strong Spark knowledge for advanced use.

- Limited built-in data quality monitoring features.

Our Review Methodology

How We Test & Score Tools

We’ve spent years building, refining, and improving our software testing and scoring system. The rubric is designed to capture the nuances of software selection and what makes a tool effective, focusing on critical aspects of the decision-making process.

Below, you can see exactly how our testing and scoring works across seven criteria. It allows us to provide an unbiased evaluation of the software based on core functionality, standout features (including integrations), ease of use, onboarding, customer support, customer reviews, and value for money.

Core Functionality (25% of final scoring)

The starting point of our evaluation is always the core functionality of the tool. Does it have the basic features and functions that a user would expect to see? Are any of those core features locked to higher-tiered pricing plans? At its core, we expect a tool to stand up against the baseline capabilities of its competitors.

Standout Features (25% of final scoring)

Next, we evaluate uncommon standout features that go above and beyond the core functionality typically found in tools of its kind. A high score reflects specialized or unique features that make the product faster, more efficient, or offer additional value to the user.

We also evaluate how easy it is to integrate with other tools typically found in the tech stack to expand the functionality and utility of the software. Tools offering plentiful native integrations, third-party connections, and API access to build custom integrations score best.

Ease of Use (10% of final scoring)

We consider how quick and easy it is to execute the tasks defined in the core functionality using the tool. High scoring software is well designed, intuitive to use, offers mobile apps, provides templates, and makes relatively complex tasks seem simple.

Onboarding (10% of final scoring)

We know how important rapid team adoption is for a new platform, so we evaluate how easy it is to learn and use a tool with minimal training. We evaluate how quickly a team member can get set up and start using the tool with no experience. High scoring solutions indicate little or no support is required.

Customer Support (10% of final scoring)

We review how quick and easy it is to get unstuck and find help by phone, live chat, or knowledge base. Tools and companies that provide real-time support score best, while chatbots score worst.

Customer Reviews (10% of final scoring)

Beyond our own testing and evaluation, we consider the net promoter score from current and past customers. We review their likelihood, given the option, to choose the tool again for the core functionality. High-scoring software reflects a high net promoter score from current or past customers.

Value for Money (10% of final scoring)

Lastly, in consideration of all the other criteria, we review the average price of entry level plans against the core features and consider the value of the other evaluation criteria. Software that delivers more, for less, will score higher.
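To make the weighting concrete, here is a minimal sketch of how the seven criteria above could be combined into a single final score. The weights come from the rubric; the per-criterion scores, the assumed 1-to-5 scale, and the function name `weighted_score` are hypothetical and are not actual Databricks ratings.

```python
# Weights taken from the rubric above, expressed as fractions of the final score.
WEIGHTS = {
    "core_functionality": 0.25,
    "standout_features": 0.25,
    "ease_of_use": 0.10,
    "onboarding": 0.10,
    "customer_support": 0.10,
    "customer_reviews": 0.10,
    "value_for_money": 0.10,
}


def weighted_score(scores):
    """Combine per-criterion scores into one weighted final score."""
    missing = set(WEIGHTS) - set(scores)
    if missing:
        raise ValueError(f"missing criteria: {sorted(missing)}")
    return sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)


# Hypothetical scores on an assumed 1-5 scale, for illustration only.
example_scores = {
    "core_functionality": 4,
    "standout_features": 5,
    "ease_of_use": 3,
    "onboarding": 3,
    "customer_support": 4,
    "customer_reviews": 4,
    "value_for_money": 3,
}

final = weighted_score(example_scores)
print(round(final, 2))
```

Because the two 25% criteria carry half of the total weight, a tool with strong core and standout features can still score well overall despite middling ease of use or onboarding.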

Core Features

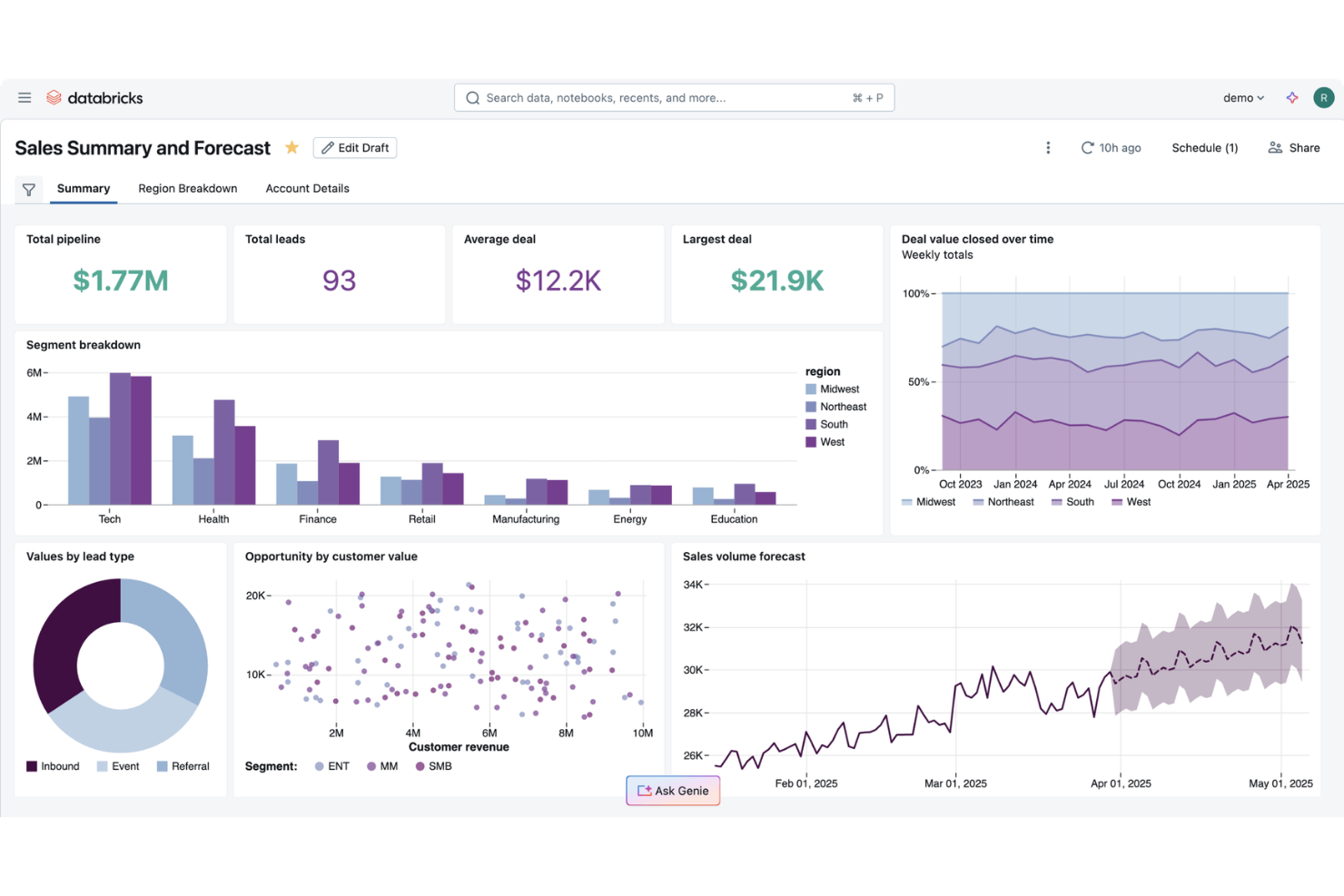

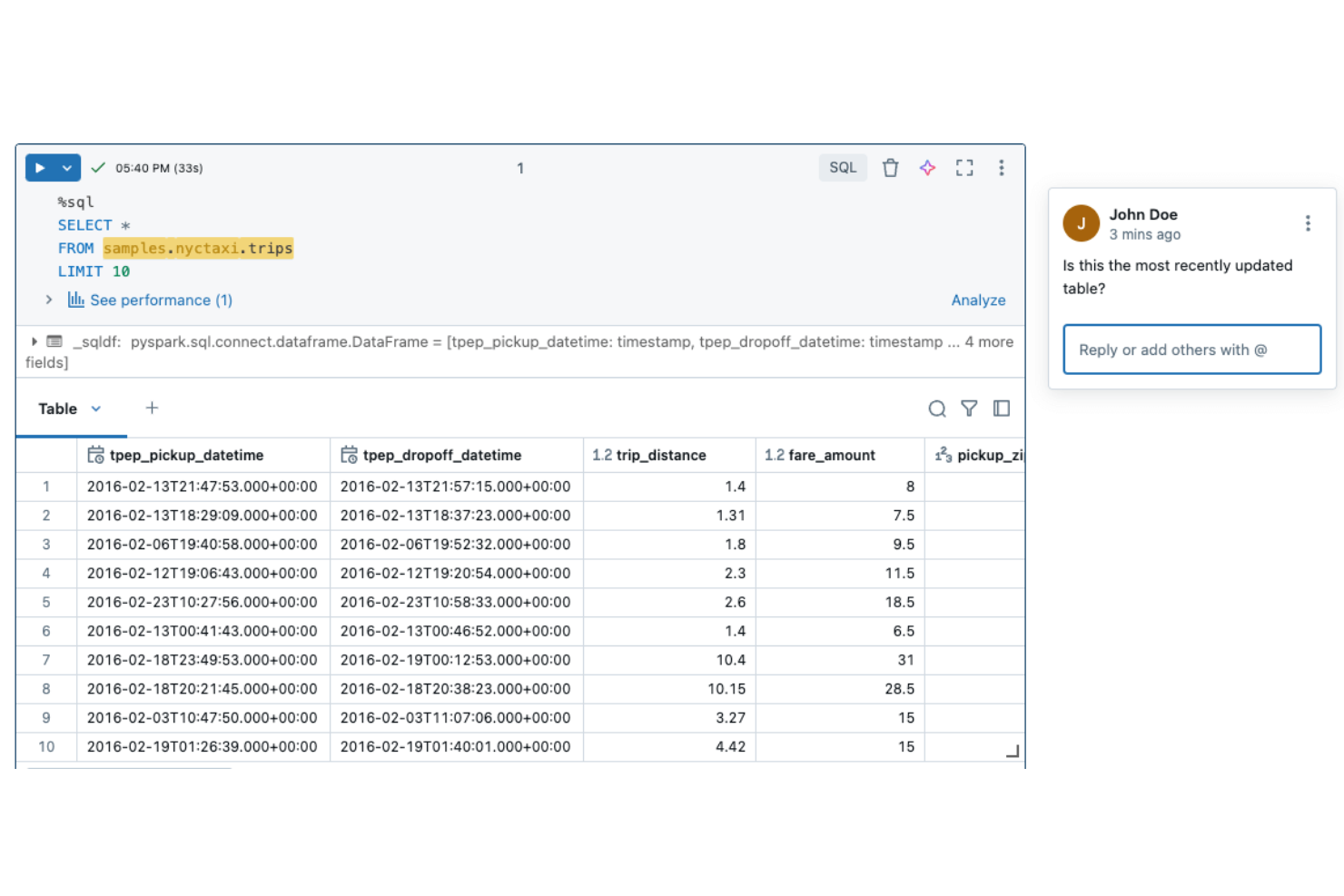

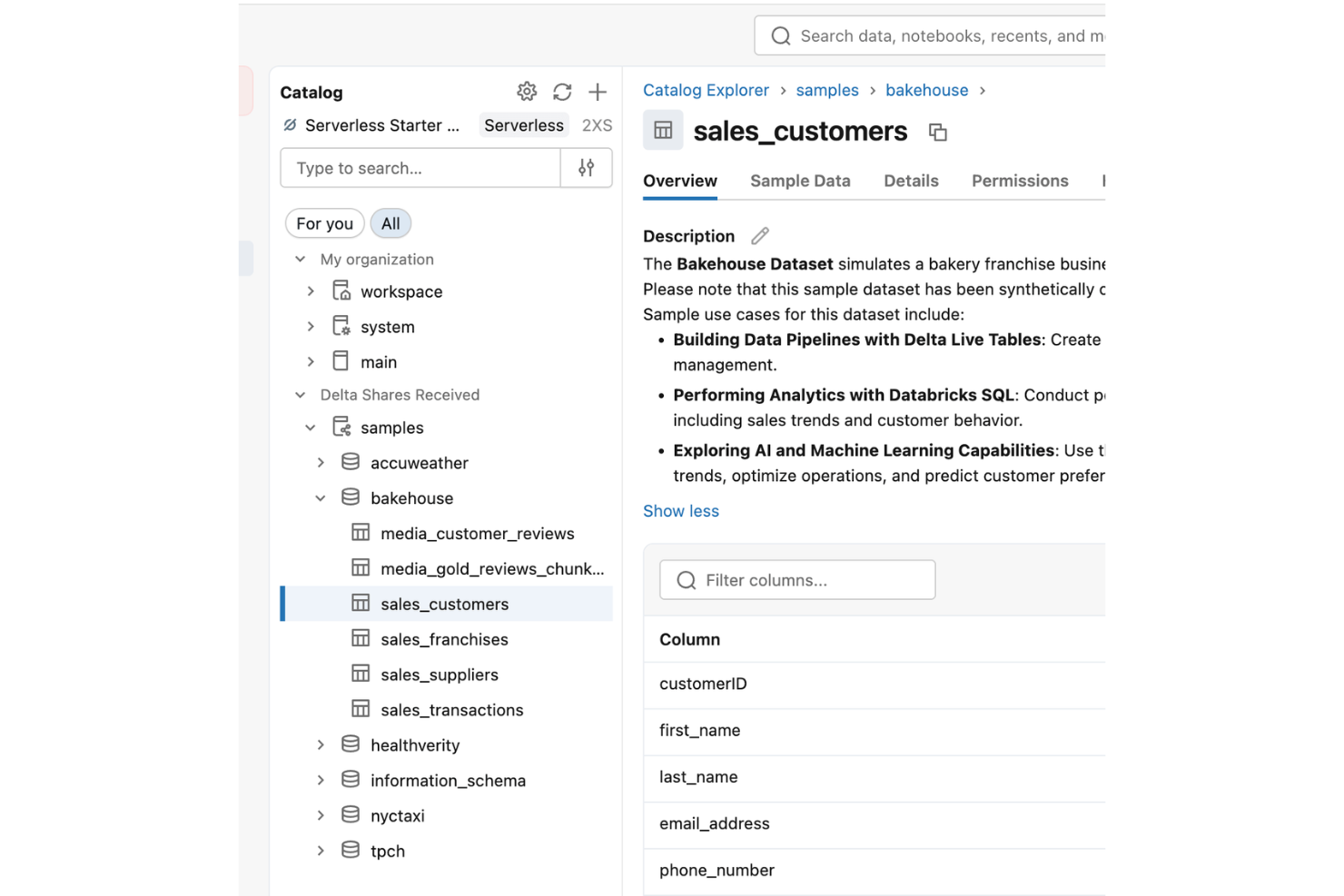

Collaborative Workspace

Share notebooks, dashboards, and code in real time across teams. This supports version control and collaboration on data projects, with built-in functions that streamline reusable logic.

Automated Cluster Management

Provision, scale, and terminate compute clusters automatically based on workload needs. This reduces manual resource management and helps control costs.
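The scaling and termination behavior described above can be pictured with a small toy policy. This is a sketch under stated assumptions, not Databricks' actual autoscaling algorithm: the function names, the tasks-per-worker ratio, the worker bounds, and the idle timeout are all hypothetical.

```python
import math


def target_workers(pending_tasks, tasks_per_worker=8, min_workers=2, max_workers=10):
    """Toy autoscaling rule: size the cluster to the backlog, within bounds.

    All parameters are illustrative assumptions, not Databricks settings.
    """
    if pending_tasks < 0:
        raise ValueError("pending_tasks must be non-negative")
    needed = math.ceil(pending_tasks / tasks_per_worker)
    # Clamp the requested size to the configured minimum and maximum.
    return max(min_workers, min(max_workers, needed))


def should_terminate(idle_minutes, idle_timeout_minutes=30):
    """Toy auto-termination rule: shut down once the cluster has idled long enough."""
    return idle_minutes >= idle_timeout_minutes


print(target_workers(40))
print(should_terminate(45))
```

The upper bound is what keeps a sudden backlog from turning into an unbounded bill, which is why setting it deliberately matters under usage-based pricing.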

Delta Lake Support

Store and manage data in an open, ACID-compliant format for reliability across data lakes. Delta Lake enables time travel, schema enforcement, and scalable data pipelines.
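To illustrate what time travel and schema enforcement mean in practice, here is a toy in-memory model. It does not use the Delta Lake API; the class `VersionedTable` and its methods are hypothetical and only mimic the two ideas: writes that violate the schema are rejected as a whole, and every successful write creates a new readable version.

```python
class VersionedTable:
    """Toy in-memory table with schema enforcement and versioned reads."""

    def __init__(self, schema):
        self.schema = dict(schema)  # column name -> expected Python type
        self._versions = [[]]       # version 0 is the empty table

    def append(self, rows):
        """Validate rows against the schema, then commit them as a new version."""
        rows = [dict(row) for row in rows]
        for row in rows:
            if set(row) != set(self.schema):
                raise ValueError(f"columns {sorted(row)} do not match the schema")
            for col, expected in self.schema.items():
                if not isinstance(row[col], expected):
                    raise TypeError(f"column {col!r} expects {expected.__name__}")
        # All-or-nothing: a new version exists only if every row passed validation.
        self._versions.append(self._versions[-1] + rows)
        return len(self._versions) - 1

    def read(self, version=None):
        """Return a copy of the rows as of a given version (latest by default)."""
        if version is None:
            version = len(self._versions) - 1
        if not 0 <= version < len(self._versions):
            raise IndexError(f"unknown version {version}")
        return [dict(row) for row in self._versions[version]]


table = VersionedTable({"id": int, "name": str})
first = table.append([{"id": 1, "name": "alpha"}])
second = table.append([{"id": 2, "name": "beta"}])

print(len(table.read(version=first)))  # rows as of the first write
print(len(table.read()))               # rows in the latest version
```

Reading an older version leaves the current data untouched, which is what makes this pattern useful for audits and for reproducing a past pipeline run.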

Job Scheduling and Orchestration

Schedule, monitor, and manage data pipelines and workflows from a unified interface. This feature automates recurring tasks and supports complex dependencies.
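Dependency handling of this kind boils down to ordering tasks so that each one runs only after the tasks it depends on. The sketch below shows that idea with Python's standard-library `graphlib`; it is not the Databricks scheduler, and the task names and the `execution_order` helper are hypothetical.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline: each task maps to the set of tasks it depends on.
pipeline = {
    "ingest": set(),
    "clean": {"ingest"},
    "aggregate": {"clean"},
    "train_model": {"clean"},
    "publish_report": {"aggregate", "train_model"},
}


def execution_order(tasks):
    """Return one valid run order; graphlib raises CycleError on circular dependencies."""
    return list(TopologicalSorter(tasks).static_order())


order = execution_order(pipeline)
print(order)
```

In this example `aggregate` and `train_model` do not depend on each other, so an orchestrator is free to run them in parallel once `clean` has finished.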

Built-In Machine Learning Tools

Access MLflow and integrated libraries for model tracking, training, and deployment. This streamlines the machine learning lifecycle within the same platform.

Advanced Security and Compliance

Apply fine-grained access controls, data encryption, and audit logging. These capabilities help meet regulatory requirements and protect sensitive data.

Ease of Use

Databricks offers a polished interface and strong documentation, but its advanced features and Spark-centric workflows can be daunting for new users or teams without deep technical expertise. Many users appreciate the collaborative workspace and automation, yet setup and pipeline management often require specialized knowledge.

Compared to simpler DataOps tools, Databricks demands more upfront investment in learning, but rewards experienced teams with powerful control and scalability.

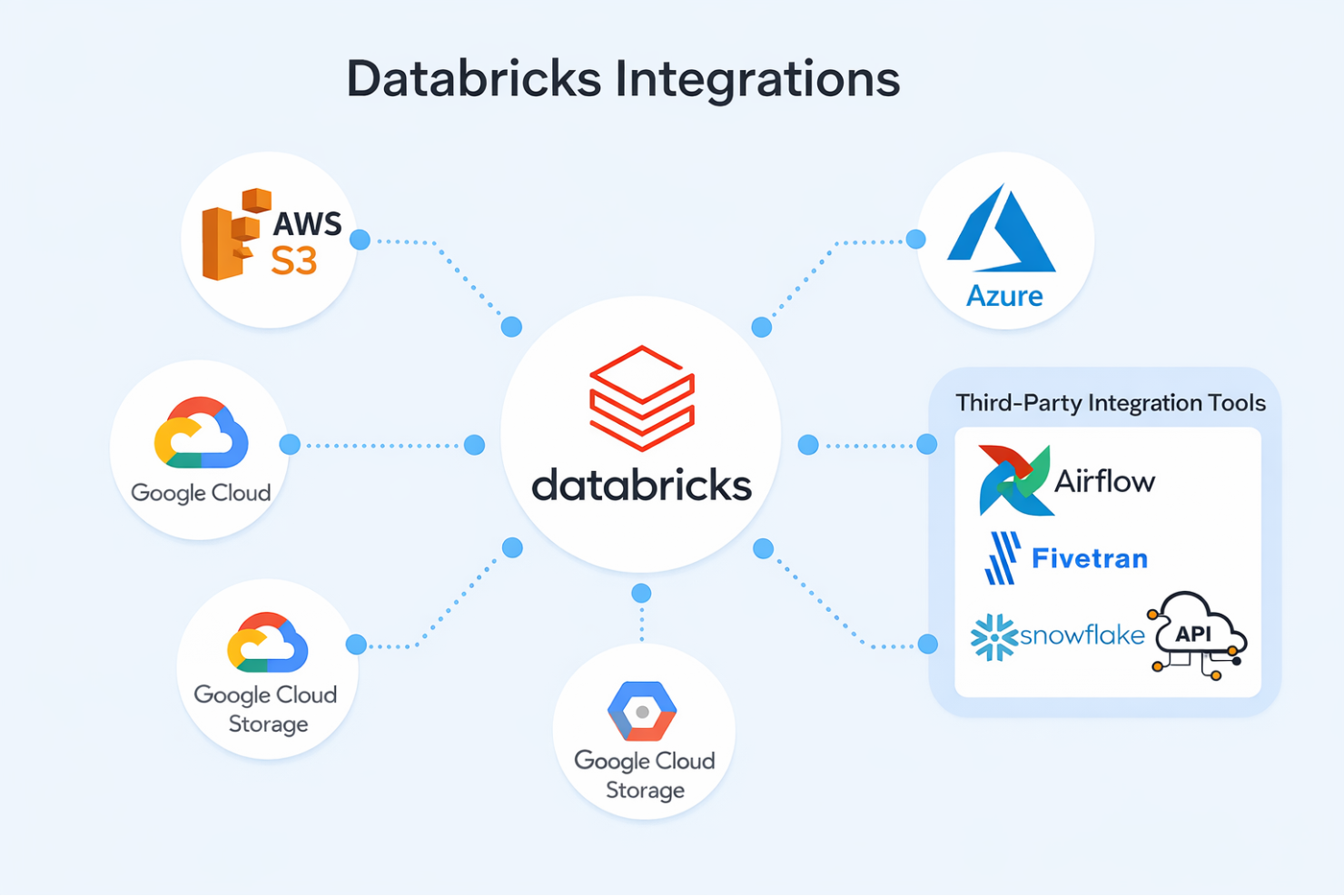

Integrations

Databricks integrates with AWS S3, Azure, Google Cloud Storage, and Snowflake, among others.

The platform also offers a robust API and supports connections with third-party integration tools for custom workflows.

Databricks Specs

- A/B Testing

- AI Integration

- Analytics

- API

- Comparative Reporting

- Conversion Tracking

- Custom Reports

- Dashboards

- Data Export

- Data Import

- Data Mining

- Data Visualization

- External Integrations

- Feedback Management

- Forecasting

- Historical Data Analysis

- Keyword Tracking

- Link Tracking

- Multi-Site

- Multi-User

- Notifications

- Process Reporting

- Real-time Alerts

- Referral Tracking

- Reports

- Scenario Planning

- SEO

- Time Series Modeling

- Visualization

- Workflow Management