We’ve entered the era of vision-native infrastructure. Drawing from more than 200,000 projects, 1 billion training images, 250,000 fine-tuned models, and 55 billion predictions per year, Roboflow’s 2026 Visual AI Trends Report reveals that the most successful teams aren’t treating computer vision as a one-off project; they’re treating it almost like CI/CD for the physical world.

They are integrating computer vision into their broader operational stack, connecting model outputs directly to production line controls, inventory systems, maintenance workflows, and quality processes. That integration is what drives measurable outcomes such as reduced downtime, consistent quality, lower operational risk, and faster response to issues on the floor.

If you’re looking for a north star project that delivers immediate, measurable ROI while actually fitting into a modern, scalable technical stack, this is how the world's leading manufacturing teams are deploying vision AI at scale today.

How Leading Industries Are Using Roboflow’s Vision AI

Across manufacturing, logistics, and consumer goods, the vision AI deployments that deliver the strongest long-term return tend to follow the same pattern. Models run inference at the edge so the system can respond quickly and meet real-time latency needs. The outputs are connected directly to downstream control systems, so detections lead to actions instead of just alerts. Teams also build active learning loops that bring new production data back into the training process, allowing the model to improve continuously as conditions change. This pattern can be seen clearly in real deployments from companies such as USG Corporation, Almond, and BNSF Railway.

1. Industrial Manufacturing: The Visual System of Record

In industrial manufacturing, defect detection and quality inspection account for about 68% of manufacturing vision deployments. In most implementations, the technical goal is not just to flag defects for human review, but also to close the loop between detection and response. When a model detects an anomaly, the system triggers a deterministic action such as diverting a product, halting a line, or signaling a PLC.

A vision system that only generates alerts for a quality manager to review functions mainly as a monitoring tool. A vision system that sends a signal directly to a PLC and automatically diverts a product becomes part of the operational infrastructure. These two architectures produce very different ROI outcomes, and the gap becomes even larger as deployments scale across production lines.
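The difference between the two architectures can be sketched in a few lines of Python. This is a minimal illustration, not a Roboflow API: the class names, the 0.6 confidence threshold, the `POLICY` table, and the `send_to_plc` placeholder are all assumptions made for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    PASS = "pass"
    DIVERT = "divert"
    HALT_LINE = "halt_line"


@dataclass(frozen=True)
class Detection:
    label: str         # class name predicted by the model
    confidence: float  # model score in [0, 1]


# Hypothetical policy: which defect class triggers which physical action.
POLICY = {
    "misalignment": Action.DIVERT,
    "crack": Action.DIVERT,
    "foreign_object": Action.HALT_LINE,
}

# Higher number means a more disruptive action.
SEVERITY = {Action.PASS: 0, Action.DIVERT: 1, Action.HALT_LINE: 2}


def decide(detections, min_confidence=0.6):
    """Return the most severe action warranted by confident detections."""
    action = Action.PASS
    for det in detections:
        if det.confidence < min_confidence:
            continue  # ignore weak detections in this sketch
        candidate = POLICY.get(det.label, Action.PASS)
        if SEVERITY[candidate] > SEVERITY[action]:
            action = candidate
    return action


def send_to_plc(action):
    """Placeholder for the real PLC write; here it only prints the signal."""
    print(f"PLC signal: {action.value}")


if __name__ == "__main__":
    frame = [Detection("misalignment", 0.91)]
    send_to_plc(decide(frame))
```

Because the same detections always map to the same action, the behavior is deterministic and easy to audit. A monitoring-only system would stop after `decide` and leave the response to a person.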

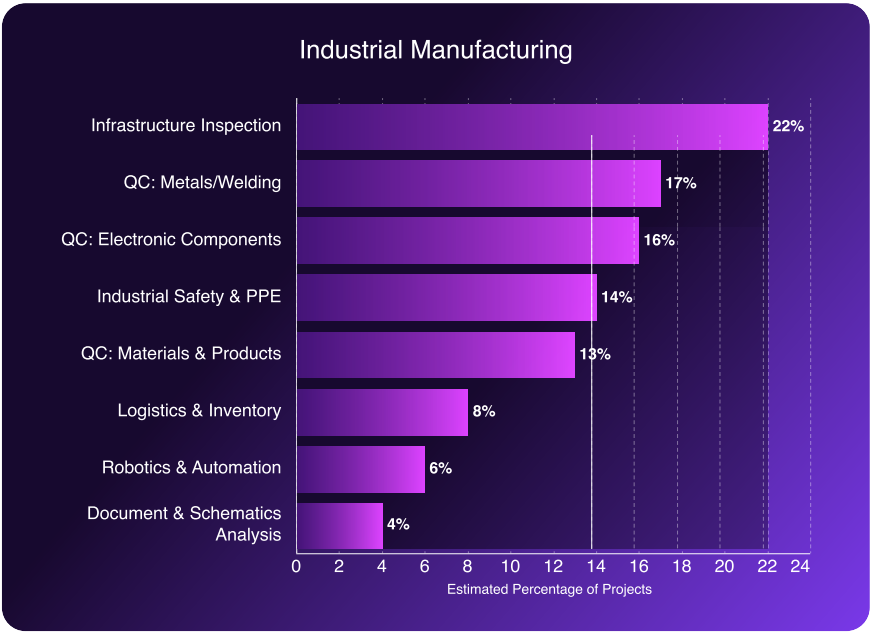

The Strategic Priorities:

- Quality Control (46%): Standardizing inspection criteria digitally across multiple global sites, reducing variability caused by operator-dependent judgment.

- Infrastructure Inspection (22%): Automated detection of cracks, leaks, and mechanical wear on equipment before they lead to unplanned downtime, supporting a shift from scheduled to predictive maintenance.

- Safety and Compliance (14%): Real-time monitoring of workplace conditions to ensure that operational rules and safety standards are being followed, such as detecting PPE violations or identifying personnel entering restricted zones and triggering automated alerts or access controls.

Case in Point: AI Reduces Subjectivity from Quality Checks at USG

USG Corporation, the largest drywall manufacturer in North America, needed consistent quality inspection across 50 manufacturing sites. The previous process relied on individual operators making subjective judgment calls on the production floor. Inspection outcomes varied between sites and between shifts, which made it difficult to enforce a single quality standard across the organization.

USG deployed a single versioned model across all 50 sites using Roboflow Inference, eliminating the need to build and maintain separate pipelines for each facility. When the model detects a board misalignment that is likely to cause a downstream jam, it sends a low-latency signal directly to the facility’s PLC to reroute the product automatically. The decision happens at machine speed, without waiting for a human to review an alert.

The outcome is a quality process that applies the same standard across every site and every shift. Inspection results are no longer dependent on which operator is on duty. The model output drives a physical action, which is what distinguishes a production-grade vision system from a monitoring dashboard.

2. Consumer Goods: Deployment Velocity and Edge Autonomy

Consumer goods production lines run at speeds where cloud-based inference is not a practical option. When a line has to be inspected at 100+ frames per second, a network round trip to a remote server adds latency that makes real-time defect detection impossible. By the time a cloud response arrives, the product in question has already passed through multiple downstream stations. Edge deployment is not an optimization in this context; it is a hard requirement.

Manufacturing, packaging, and processing quality control together account for 63% of consumer goods vision deployments. The teams running these systems successfully are deploying models directly on edge devices, keeping inference latency in the sub-millisecond range, and eliminating any dependency on network availability.
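To make the latency argument concrete, here is a small back-of-the-envelope sketch. The 50 ms cloud round trip and the 0.8 ms local figure are assumed values chosen for illustration, not measurements from the report.

```python
import math


def frame_budget_ms(fps):
    """Time available per frame, in milliseconds, at a given frame rate."""
    return 1000.0 / fps


def frames_elapsed(latency_ms, fps):
    """Number of new frames that arrive while one result is still in flight."""
    return math.floor(latency_ms * fps / 1000.0)


def fits_budget(latency_ms, fps):
    """True if a result comes back before the next frame is due."""
    return latency_ms <= frame_budget_ms(fps)


if __name__ == "__main__":
    fps = 100
    for name, latency in [("cloud round trip (assumed)", 50.0),
                          ("local edge inference (assumed)", 0.8)]:
        print(name, fits_budget(latency, fps), frames_elapsed(latency, fps))
```

At 100 frames per second the budget is 10 ms per frame, so under these assumptions a 50 ms round trip means five more frames have gone by before the first answer returns.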

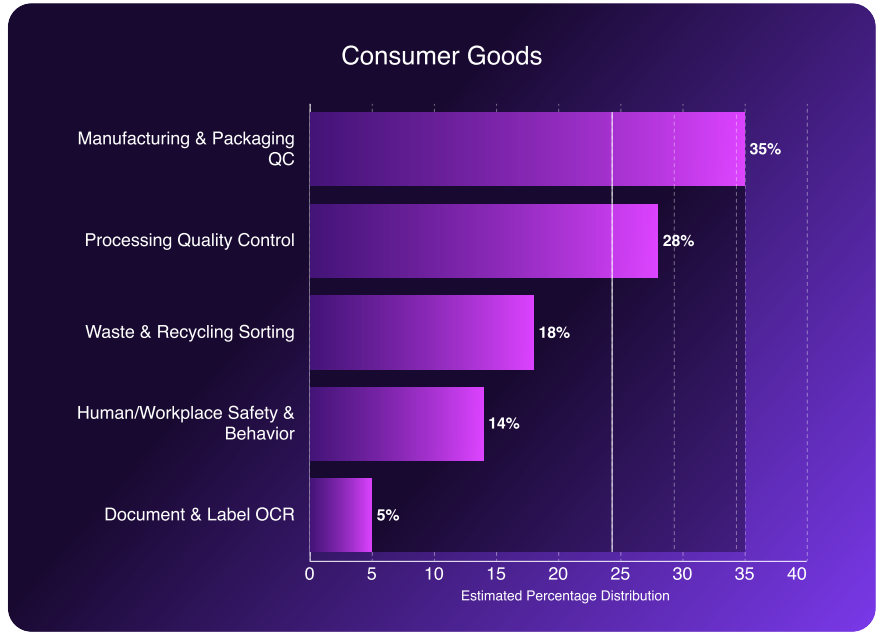

The Strategic Priorities:

- Packaging QC (35%): Real-time verification of label placement, print quality, and seal integrity at production line speed, before products are packaged and shipped.

- Process Quality (28%): Automated inspection of textiles, food products, and other processed goods for surface defects or contamination while items are in motion.

- Automated Sorting (18%): High-throughput classification and separation of materials in recycling and waste streams, replacing manual sorting processes that cannot scale to production volumes.

Case in Point: Almond

Traditional industrial robots operate well in fixed, high-volume environments where product type and position are consistent. When product variety increases, as in high-mix picking operations, conventional robots struggle because they lack the visual understanding needed to adapt to changing inputs. Almond built a vision-powered robotic picking system specifically designed to handle this variability.

Switching to RF-DETR, a transformer-based object detection architecture optimized for real-time edge performance, increased Almond’s picking accuracy from 67% to 81%. That improvement moves the system from marginal to commercially viable across a much broader range of product types.

The system runs fully on-premise using the Roboflow Inference Server. Inference is local, so response times are deterministic and not subject to network variability. Facility video data stays on-site, which addresses data sovereignty requirements that are often a procurement blocker in enterprise industrial environments.

3. Logistics and Robotics: The MLOps Flywheel

A common challenge in logistics and robotics deployments is model performance degradation after launch. A model that performs well during training and validation will eventually face new conditions in production that were not part of the original training data. For example, product packaging may change, or layouts in the warehouse may be updated. When these changes are not incorporated back into the model, performance slowly declines, and the problem may remain unnoticed until it begins to affect operations.

This is usually not a model quality problem but a system architecture problem. The practical solution is to design the deployment with continuous retraining in mind from the beginning, rather than treating retraining as a later maintenance task. Systems that require a large manual retraining project every few months become expensive to operate and difficult to scale.
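One way to build that in from the start is to watch a cheap signal in production and flag when it drifts. The sketch below uses a rolling mean of detection confidence as that signal, which is an assumption for illustration: confidence is only a proxy for accuracy, and the baseline, window size, and tolerance shown here are hypothetical values a team would tune for its own line.

```python
from collections import deque


class DriftMonitor:
    """Flags a model for retraining when rolling mean confidence drops."""

    def __init__(self, baseline, window=500, max_drop=0.10):
        self.baseline = baseline              # mean confidence at validation time
        self.max_drop = max_drop              # tolerated drop before flagging
        self.scores = deque(maxlen=window)    # most recent confidences only

    def observe(self, confidence):
        self.scores.append(confidence)

    def rolling_mean(self):
        if not self.scores:
            return None
        return sum(self.scores) / len(self.scores)

    def needs_retraining(self):
        # Wait for a full window so a few odd frames do not trigger a flag.
        if len(self.scores) < self.scores.maxlen:
            return False
        return self.baseline - self.rolling_mean() > self.max_drop


if __name__ == "__main__":
    monitor = DriftMonitor(baseline=0.90, window=4)
    for score in [0.9, 0.88, 0.7, 0.65, 0.6, 0.62]:
        monitor.observe(score)
    print(monitor.needs_retraining())
```

A check like this turns silent degradation into an explicit event that can kick off data collection and retraining, instead of waiting for operations to notice.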

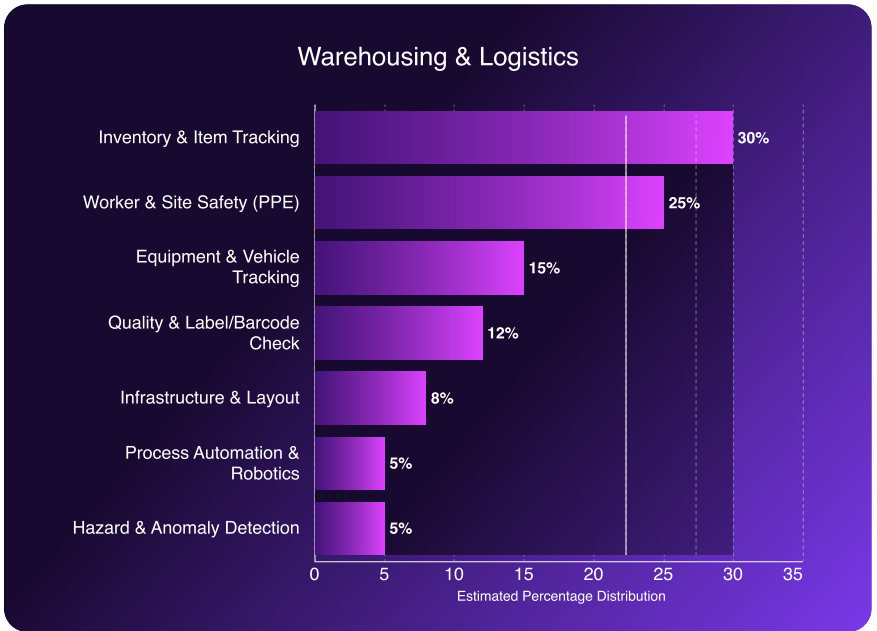

In warehouse environments, inventory and item tracking is the largest use case at around 30%, followed by worker safety and PPE monitoring at about 25%. Many of these systems run on existing overhead camera infrastructure, so additional hardware investment is relatively small. The main operational challenge is maintaining model accuracy as the warehouse environment gradually changes over time.

The Strategic Priorities:

- Autonomous Inventory Tracking (30%): Continuous SKU-level counting using existing overhead cameras, replacing periodic manual cycle counts with real-time inventory visibility.

- Schematic Verification (12%): Automated comparison of physical pallet builds and assembly configurations with digital specifications, helping detect discrepancies before products leave the facility.

Case in Point: BNSF Railway

BNSF, a Berkshire Hathaway subsidiary, operates one of the largest freight rail networks in North America. Managing a network that spans about 32,500 miles makes large-scale manual inspection extremely difficult. The company uses computer vision built with Roboflow to automate inventory tracking in intermodal yards and to inspect train wheels at important points across the rail network.

As Asim Ghanchi, BNSF’s AVP of Technology, explains, “Achieving positive results using AI in a lab environment is easy, but the real challenge comes when scaling the solution across a network like ours without disrupting day-to-day operations.”

To build and deploy these systems, Roboflow provides capabilities such as labeling and dataset optimization, hosted model training, and edge AI inference so models can run close to where inspections happen. Human-in-the-loop workflows are also used to improve datasets over time. When the model encounters a novel edge case or produces a low-confidence detection, the system can automatically sample that data and send it for human review. Once labeled, those examples can be added back into the training dataset, helping the model adapt as real-world conditions change.
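The sampling step in that loop can be sketched as follows. This is a simplified illustration rather than Roboflow's implementation: it covers only the low-confidence case, and the confidence band, the review budget, and the `select_for_review` helper are hypothetical.

```python
def select_for_review(predictions, low=0.3, high=0.6, budget=100):
    """Pick the frames the model is least sure about.

    predictions: dict mapping a frame id to a list of detection confidences.
    Returns up to `budget` frame ids, most uncertain first.
    """
    uncertain = []
    for frame_id, scores in predictions.items():
        # A detection inside the band is neither clearly right nor clearly noise.
        borderline = [s for s in scores if low <= s < high]
        if borderline:
            uncertain.append((min(borderline), frame_id))
    uncertain.sort()  # lowest borderline confidence first
    return [frame_id for _, frame_id in uncertain[:budget]]


if __name__ == "__main__":
    preds = {
        "frame_001": [0.95],
        "frame_002": [0.45, 0.90],
        "frame_003": [0.35],
        "frame_004": [],
    }
    print(select_for_review(preds, budget=2))
```

Capping the selection with a budget keeps the human review queue manageable while still steering labeling effort toward the examples most likely to improve the next model version.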

Deploying vision systems in these environments requires models that can operate reliably across different locations and changing conditions. Lighting varies across regions and seasons, camera setups may differ between sites, and weather conditions can range from extreme heat to sub-zero temperatures. The real challenge is not just building a model that works in testing, but deploying a system that continues to perform consistently across a large and complex operational network.

By automating tasks such as asset inspection and yard inventory monitoring, computer vision helps the company improve visibility into operations and detect potential issues earlier across its rail infrastructure.

Vision as Your Competitive AI Advantage

In 2026, the competitive gap is no longer simply between companies that use AI and those that do not. The more meaningful difference is between organizations that run a small, isolated AI project and those that build fully functional and reliable computer vision into their operational systems.

The deployments with the strongest long-term results are built as complete systems, rather than standalone models. Inference runs at the edge so decisions can happen in real time. Human-in-the-loop workflows help teams review uncertain cases and add new data back into the training dataset. Integration with downstream systems ensures that detections trigger actions such as alerts, process adjustments, or control signals.

The goal is to create a vision system that continues to perform as real-world conditions change. Lighting shifts, products evolve, camera hardware varies across locations, and production environments rarely remain static. A production deployment must handle these changes while scaling across sites without constant manual maintenance.

This is why Roboflow has become the industry standard. Whether you’re deploying RF-DETR at the far edge to catch defects at 100+ FPS or orchestrating an active learning flywheel across more than 30,000 miles of rail, Roboflow’s vision AI platform is the foundation that makes it possible for over half the Fortune 100 today.

The physical world is ready to be digitized. Build the future with Roboflow.