If you have rolled out AI coding tools in the last year, you are probably seeing the same thing we hear from engineering teams everywhere: the tools are working. Features ship faster. Developers are more productive. The output is genuinely remarkable.

And yet something still feels stuck. The engineers you most wanted to free up for harder problems are stretched thin. Deployments surface a wave of bugs that takes days to work through. The velocity gains are real, but they are not compounding the way they should.

This is not a problem with AI coding. It is a sign that AI coding is working so well that it has outpaced the infrastructure around it. AI-generated code produces roughly 1.7 times more errors than human-written code, and code review time across the industry has increased by 93%. The generation side of the workflow has been transformed. The verification side has not kept up.

Closing that gap is not about slowing down. It is about building the next layer of AI infrastructure that lets teams accelerate further.

The bottleneck has moved, but it has not disappeared

The original promise of AI coding was simple: developers spend too much time writing boilerplate. Let the AI handle that, and engineers can focus on harder problems. It worked. But the assumption embedded in that promise was wrong.

The assumption was that writing code was the bottleneck. It was not. The bottleneck was always the full cycle: write, verify, deploy, discover, debug, fix, verify again. AI accelerated one part of that loop dramatically while leaving the rest untouched. The result is a backlog problem that looks different but is fundamentally the same: engineering capacity is constrained, and the constraint has simply moved downstream.

Your coding agent can draft a feature in minutes. But before that feature ships, someone still has to verify that it works, not just in unit tests but across the full system: database queries, third-party API behavior, configuration state, permission layers, and edge cases from real user patterns. That someone is usually your most experienced engineer, and they are now doing more of this work, not less.

And then the code deploys. What follows is a phase most engineering leaders know well: a concentrated period of bug hunting as issues that were invisible in review surface under real conditions. For every two steps forward, teams find themselves taking one back, firefighting regressions instead of building what is next. The solution is not to slow the generation side down. It is to bring the verification side up to speed.

Completing the loop

The answer is to bring the same automation logic to verification that already exists on the generation side. Not a copilot that waits for a developer to ask it to write a test. An autonomous agent that verifies code continuously, at every stage of the lifecycle, without anyone prompting it to start.

This is what we built Checksum to do. Checksum is a continuous quality platform: an always-on layer that sits alongside your CI/CD pipeline and your coding agents, autonomously generating, running, and maintaining tests, so that by the time a pull request reaches a human reviewer, it has already been executed against realistic production conditions.

The practical result is that the prompt-test-prompt cycle that consumes so much engineering time either disappears or runs between machines. Your engineers stop being the verification layer and go back to being builders.

How it works across the development lifecycle

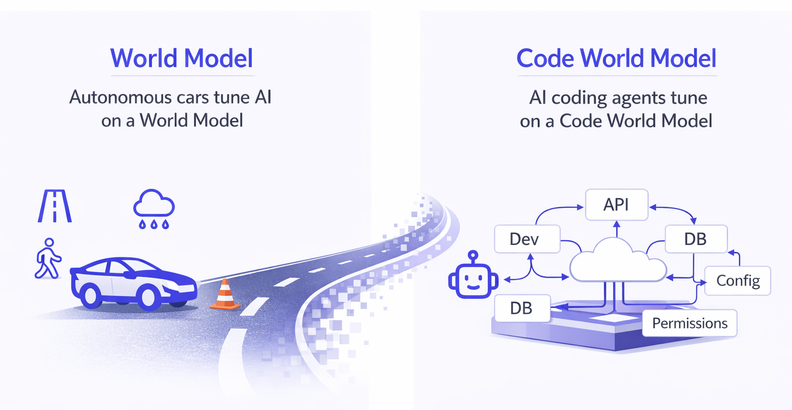

Checksum's agents are built on what we call the Code World Model: a simulation of the digital environment your software actually runs in. Rather than testing code in isolation, the model accounts for database states, API behaviors, configuration files, permission layers, and real user interaction patterns, which together make up the full context that determines whether software works in production. That foundation is what makes the agents below meaningfully different from conventional testing tools.

Checksum covers three layers that together address the full verification gap.

End-to-end testing. The E2E agent builds a graph of your entire application, maps every screen, interaction, and flow, and generates production-ready Playwright tests. Those tests live in your repository as code you own outright, with no vendor lock-in. When your UI evolves, the agent heals the affected tests automatically. Teams that previously spent weeks building and maintaining test suites get that time back.

CI validation. The CI agent generates 50 to 200 targeted tests per pull request, specific to the code that changed. It sets up the necessary infrastructure automatically, runs inside your existing CI pipeline using your existing frameworks, and catches logic bugs that static analysis misses entirely. Every PR gets executed before it is reviewed.
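To make "specific to the code that changed" concrete, here is a minimal sketch that picks tests by matching the files in a pull request against the source files each test exercises. It is an illustration only, not Checksum's implementation: the agent generates new tests rather than selecting from a fixed list, and the `TestCase` shape, the `selectTargetedTests` function, and the file paths are all hypothetical.

```typescript
// Hypothetical shape: a test plus the source files it exercises.
interface TestCase {
  name: string;
  covers: string[];
}

// Return only the tests that touch at least one changed file.
function selectTargetedTests(changedFiles: string[], tests: TestCase[]): TestCase[] {
  const changed = new Set(changedFiles);
  return tests.filter((t) => t.covers.some((file) => changed.has(file)));
}

// Illustrative data, not taken from a real repository.
const tests: TestCase[] = [
  { name: "checkout applies discount", covers: ["src/cart.ts", "src/pricing.ts"] },
  { name: "login rejects bad password", covers: ["src/auth.ts"] },
  { name: "invoice totals include tax", covers: ["src/pricing.ts", "src/invoice.ts"] },
];

// A pull request that only changes pricing logic.
const selected = selectTargetedTests(["src/pricing.ts"], tests);
console.log(selected.map((t) => t.name));
```

In this sketch, a change to `src/pricing.ts` selects the two pricing-related tests and skips the login test, which is the basic idea behind scoping verification to the diff.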

API coverage. The API agent analyzes every endpoint, parameter, header, and payload structure in your API, generating tests that verify end-to-end flows across multiple systems, not just whether an endpoint returns 200. Feed it OpenAPI specs or Swagger docs, or capture real sessions via the SDK.
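The difference between checking a status code and checking a flow is easy to show with a small sketch. The in-memory `FakeOrdersApi` below is a hypothetical stand-in for a real service, with a deliberate bug: creating an order answers 201 but never stores it. None of these names come from Checksum; they exist only for this example.

```typescript
interface ApiResponse {
  status: number;
  body: unknown;
}

interface Order {
  id: string;
  sku: string;
  quantity: number;
}

// Hypothetical in-memory API with a deliberate persistence bug.
class FakeOrdersApi {
  private orders = new Map<string, Order>();
  private nextId = 1;

  createOrder(sku: string, quantity: number): ApiResponse {
    const order: Order = { id: String(this.nextId++), sku, quantity };
    // Bug: the order is never saved to this.orders.
    return { status: 201, body: order };
  }

  getOrder(id: string): ApiResponse {
    const order = this.orders.get(id);
    return order ? { status: 200, body: order } : { status: 404, body: null };
  }
}

// Status-only check: looks at the response code and nothing else.
function statusOnlyCheck(api: FakeOrdersApi): boolean {
  return api.createOrder("SKU-1", 2).status === 201;
}

// Flow check: create an order, read it back, and compare the stored data.
function flowCheck(api: FakeOrdersApi): boolean {
  const created = api.createOrder("SKU-1", 2);
  if (created.status !== 201) return false;
  const { id } = created.body as Order;
  const fetched = api.getOrder(id);
  if (fetched.status !== 200) return false;
  const stored = fetched.body as Order;
  return stored.sku === "SKU-1" && stored.quantity === 2;
}

const statusResult = statusOnlyCheck(new FakeOrdersApi());
const flowResult = flowCheck(new FakeOrdersApi());
console.log({ statusResult, flowResult });
```

Here the status-only check passes while the flow check fails, because only the second one notices that the created order cannot be read back.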

All three agents integrate directly with Cursor, Claude Code, and over a hundred other AI coding tools via slash commands. Your existing stack stays in place, and verification gets built into the loop rather than bolted on at the end.

What teams using Checksum are seeing

Clearpoint Strategy builds strategic planning and reporting software for large enterprises, a product category where release quality is non-negotiable. Their engineering team was caught in a familiar trap: the test suite could not keep up with the pace of development, manual validation kept falling to engineers who should have been building, and bugs were making it to customers they could not afford to disappoint.

Working with Checksum, they went from an unreliable suite to more than 250 end-to-end tests in under a month, all running automatically inside their existing pipeline. When UI changes broke tests, the agent repaired them without anyone filing a ticket. The team now catches six critical bugs per week before they ship and recoups $500K per year that was previously absorbed by manual testing overhead.

Postilize is a fast-moving AI SaaS company, and for a while their release process reflected that: ship fast, fix what breaks, repeat. As the platform grew more complex, the cycle became unsustainable. Every two steps forward meant a step back to fight regressions, and the constant context switching between new feature work and bug cleanup was eroding the team's ability to execute on their actual roadmap.

After implementing Checksum, every pull request gets automatically tested before it reaches production, and the test suite adapts as new features ship rather than accumulating debt. The results were a 70% reduction in bugs and engineering cycles that run 30% faster, with zero test flakiness. Shipping to production daily went from an aspiration to a routine.

Accelerating the full loop

The teams pulling ahead right now are not the ones who adopted AI coding earliest. They are the ones who closed the loop, pairing fast generation with automated verification so the gains on one side compound instead of creating drag on the other.

AI coding has transformed how software gets written. The Code World Model is what transforms how it gets verified. Together, they make the full cycle, from prompt to production, something that moves at the speed AI was always supposed to unlock.

See how Checksum works with your stack at checksum.ai